About

Essence Neural Networks


Project Details

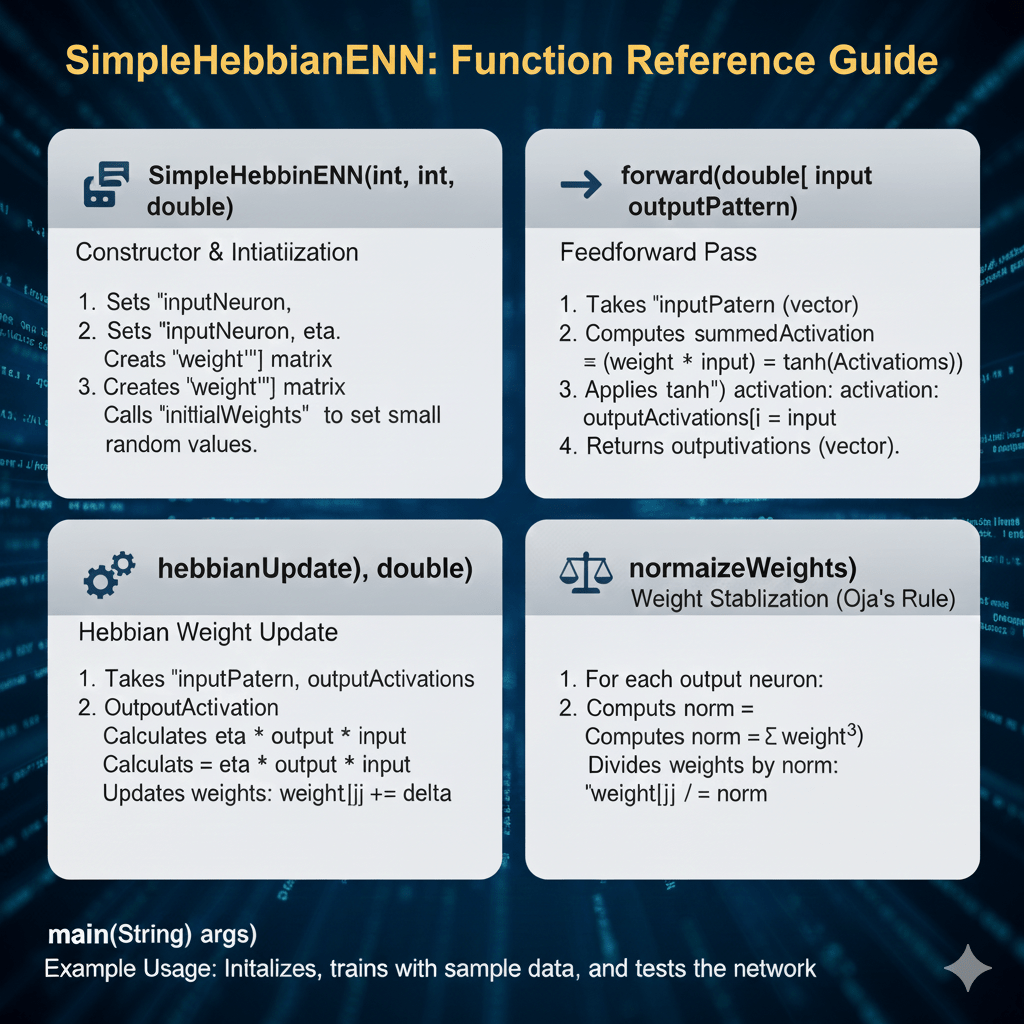

Essence Neural Networks are a lightweight framework that distills the essential structure of a larger model into a smaller, interpretable one. The unsupervised Hebbian network learns a single-layer representation of input arrays: synaptic weights are strengthened toward the test array based on the co-activation of input pixels and neuronal firing.
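The co-activation idea corresponds to the plain Hebbian rule, Δw_i = η·x_i·y: a weight grows when input pixel x_i and the neuron's output y are active together. The sketch below is a minimal illustration under that assumption, not the project's own code; the function name `hebbian_update` and the learning rate `lr` are hypothetical.

```python
def hebbian_update(weights, x, y, lr=0.1):
    """Plain Hebbian rule: w_i <- w_i + lr * x_i * y.

    A weight grows when input pixel x_i and the neuron's output y have
    the same sign (co-activation) and shrinks when their signs disagree.
    `lr` is an assumed learning rate.
    """
    return [w + lr * xi * y for w, xi in zip(weights, x)]


# Hypothetical example: a neuron fires (y = 1) while seeing {1, 0, -1}.
w = hebbian_update([0.0, 0.0, 0.0], x=[1, 0, -1], y=1.0)
print(w)  # [0.1, 0.0, -0.1]
```

The zero-valued pixel leaves its weight untouched, which is why only the first and last weights move toward the input.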

Unsupervised Hebbian

How the network specializes on one input

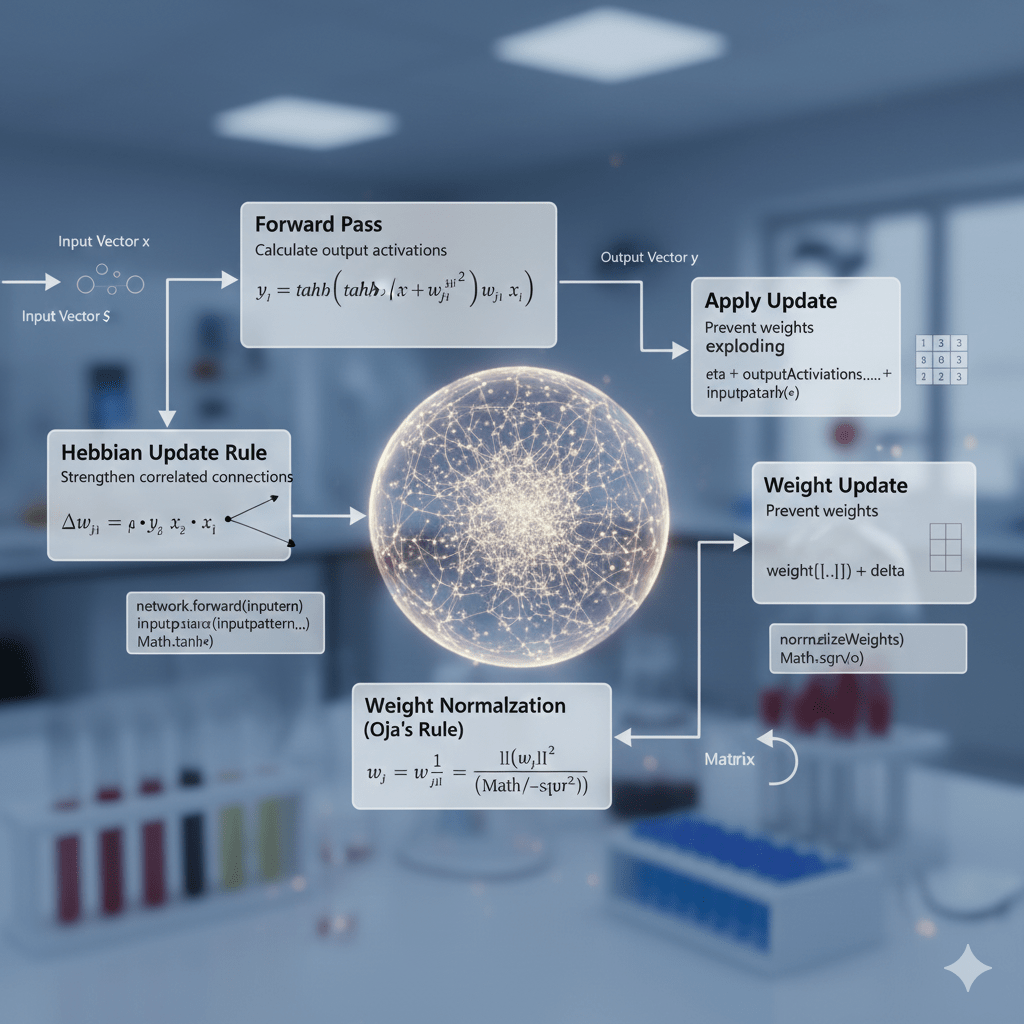

The network trains on three inputs, {1, 0, -1}, {0, 1, 1}, and {1, 1, 0}, and each neuron either fires in response to the test input or is strongly inhibited by it. The weights are initialized, and for each input pattern the weighted inputs are summed and passed through an activation function.
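A minimal sketch of this forward pass for two neurons, assuming uniform random initialization in [-0.5, 0.5] and `tanh` as the activation function (the text does not name either); `init_weights` and `forward` are hypothetical helpers.

```python
import math
import random

# The three training inputs listed in the text.
INPUTS = [[1, 0, -1], [0, 1, 1], [1, 1, 0]]


def init_weights(n_neurons, n_inputs, seed=0):
    """Random initial weights; the uniform [-0.5, 0.5] range is an assumption."""
    rng = random.Random(seed)
    return [[rng.uniform(-0.5, 0.5) for _ in range(n_inputs)]
            for _ in range(n_neurons)]


def forward(weights, x):
    """Sum each neuron's weighted inputs, then squash with tanh (assumed) into (-1, 1)."""
    return [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in weights]


W = init_weights(n_neurons=2, n_inputs=3)
for x in INPUTS:
    # Positive outputs mean the neuron fires; negative outputs mean it is inhibited.
    print(x, [round(a, 3) for a in forward(W, x)])
```

A signed activation such as `tanh` is used here so that "firing" and "inhibition" map naturally onto positive and negative outputs.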

Hebbian updates increase the weights where active pixels align with the correct class and decrease them when a neuron activates for the wrong class.
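In the unsupervised setting there are no labels, so the text does not say how the "correct class" is chosen. One common option, assumed here, is winner-take-all: the most strongly activated neuron is treated as correct and moves toward the input, while the others are pushed away from it. The function `competitive_hebbian_step` is hypothetical.

```python
import math


def forward(weights, x):
    """tanh of each neuron's weighted input sum (activation choice is an assumption)."""
    return [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in weights]


def competitive_hebbian_step(weights, x, lr=0.1):
    """One update where the strongest responder stands in for the 'correct class'.

    Assumption: winner-take-all. The winner is treated as if its output were +1
    (Hebbian: w += lr * x); every other neuron as if its output were -1
    (anti-Hebbian: w -= lr * x), which moves it away from the input.
    """
    acts = forward(weights, x)
    winner = max(range(len(acts)), key=lambda i: acts[i])
    updated = []
    for i, row in enumerate(weights):
        target = 1.0 if i == winner else -1.0
        updated.append([w + lr * target * xi for w, xi in zip(row, x)])
    return updated, winner


W = [[0.2, 0.0, -0.1], [0.0, 0.1, 0.1]]
W2, winner = competitive_hebbian_step(W, [1, 0, -1])
print(winner)  # 0
```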

Finally, the weights are normalized and updated according to the Hebbian rule, and the test outputs are computed from each neuron's strength of activation to the input.
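A sketch of the update-and-normalize loop on the test input {1, 0, -1}, assuming the plain Hebbian step Δw = η·y·x, `tanh` activation, and L2 normalization after every step (the text does not specify the norm). Because normalization keeps each weight vector bounded, repeated updates rotate it toward either +x or -x, so each neuron ends up firing strongly or being strongly inhibited.

```python
import math
import random

TEST = [1, 0, -1]


def forward(weights, x):
    """tanh of each neuron's weighted input sum (activation choice is an assumption)."""
    return [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in weights]


def normalize(row):
    """Rescale a weight vector to unit L2 norm (the norm used is an assumption)."""
    norm = math.sqrt(sum(w * w for w in row))
    return row if norm == 0 else [w / norm for w in row]


def train(weights, x, steps=200, lr=0.1):
    """Repeated Hebbian steps (w += lr * y * x), each followed by renormalization."""
    for _ in range(steps):
        ys = forward(weights, x)
        weights = [normalize([w + lr * y * xi for w, xi in zip(row, x)])
                   for row, y in zip(weights, ys)]
    return weights


rng = random.Random(0)
W = [normalize([rng.uniform(-0.5, 0.5) for _ in range(3)]) for _ in range(2)]
W = train(W, TEST)
# Test output: each neuron's activation strength on the test input.
print([round(a, 3) for a in forward(W, TEST)])
```

Each neuron converges to roughly +0.888 (fires) or -0.888 (inhibited), i.e. ±tanh(√2), depending on the sign of its initial response to the test input.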

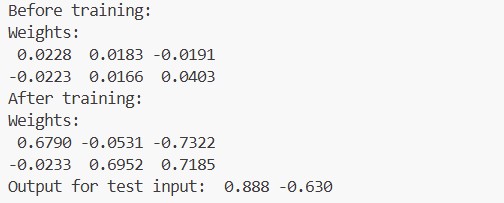

Initial input weights of two neurons before training on the test input {1, 0, -1}. Neuron 1 fired strongly to the test input, while the other neuron did not fire.

Supervised Hebbian L vs T

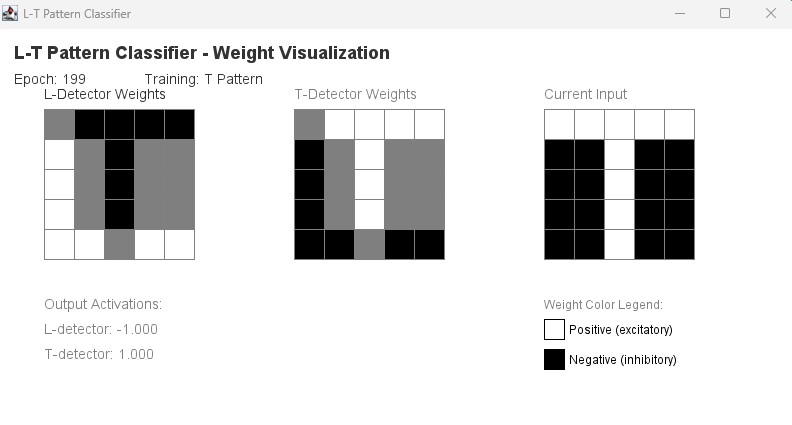

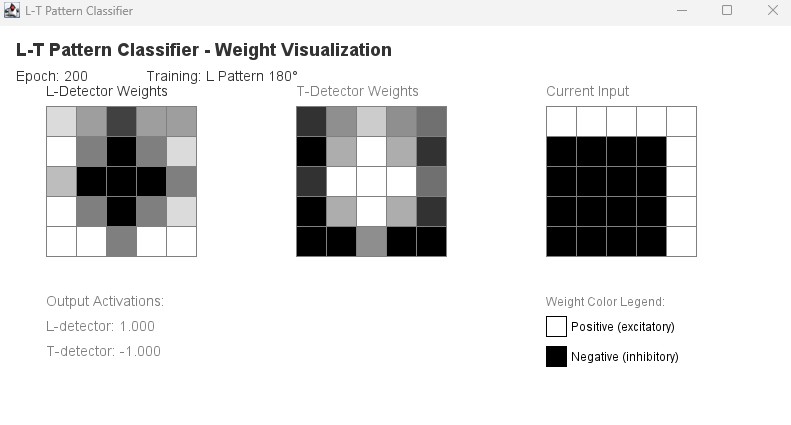

How the network learns L vs T

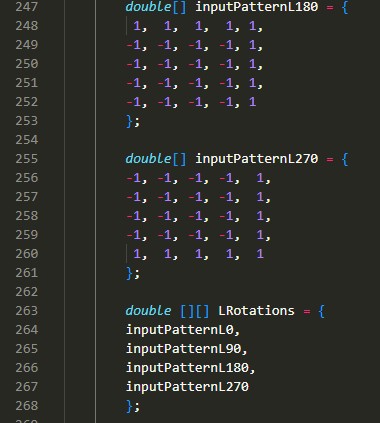

The network receives binary image inputs (arrays of 1s and -1s) and a supervised label (L or T).
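A minimal sketch of this input encoding, assuming 3x3 bitmaps (the actual images may be larger) with 1 for an active pixel and -1 for background; `L_GRID`, `T_GRID`, `flatten`, and `DATASET` are hypothetical names. With ±1 coding, the dot product of two images equals the number of agreeing pixels minus the number of disagreeing ones.

```python
# Hypothetical 3x3 bitmaps: 1 = ink (active pixel), -1 = background.
L_GRID = [
    [1, -1, -1],
    [1, -1, -1],
    [1,  1,  1],
]
T_GRID = [
    [1,  1,  1],
    [-1, 1, -1],
    [-1, 1, -1],
]


def flatten(grid):
    """Row-major flattening into the 1-D input vector the neurons see."""
    return [v for row in grid for v in row]


# (input vector, supervised label) pairs.
DATASET = [(flatten(L_GRID), "L"), (flatten(T_GRID), "T")]

# Agreements minus disagreements between the two shapes.
x_l, x_t = DATASET[0][0], DATASET[1][0]
print(sum(a * b for a, b in zip(x_l, x_t)))  # -3 (3 pixels agree, 6 disagree)
```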

Hebbian updates increase the weights where active pixels align with the correct class and decrease them when a neuron fires for the wrong class.
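One way to express this supervised rule, as a sketch: the labeled neuron gets a Hebbian step toward the input, any other neuron whose net input is positive (it "fires") gets the opposite step, and silent neurons are left alone. The firing threshold of 0, the learning rate, and the name `supervised_hebbian_step` are assumptions.

```python
def supervised_hebbian_step(weights, x, label_idx, lr=0.1):
    """One supervised Hebbian update for a single labeled example.

    weights[i] is neuron i's weight vector; label_idx picks the correct neuron.
    - Correct neuron: w += lr * x (strengthen where pixels agree with the label).
    - Any other neuron that fires (net input > 0, assumed threshold): w -= lr * x.
    - Other neurons that stay silent are left unchanged.
    """
    net = [sum(w * xi for w, xi in zip(row, x)) for row in weights]
    updated = []
    for i, row in enumerate(weights):
        if i == label_idx:
            updated.append([w + lr * xi for w, xi in zip(row, x)])
        elif net[i] > 0:
            updated.append([w - lr * xi for w, xi in zip(row, x)])
        else:
            updated.append(list(row))
    return updated


# Hypothetical 2-pixel example: neuron 1 wrongly fires for an input labeled 0.
W = supervised_hebbian_step([[0.0, 0.0], [0.5, 0.5]], x=[1, 1], label_idx=0)
print(W)  # [[0.1, 0.1], [0.4, 0.4]]
```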

During training, the L neuron becomes sensitive to the vertical-stroke-plus-base pattern, while the T neuron emphasizes the horizontal bar with a central stem, which lets the network separate the two shapes reliably.
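An end-to-end sketch of L vs. T training under the same assumptions as above: hypothetical 3x3 bitmaps, zero initial weights, five epochs, and the supervised rule "strengthen the correct neuron, weaken any other neuron that fires". It prints each neuron's weights as a 3x3 grid and classifies the clean shapes plus an L with one flipped pixel.

```python
# Hypothetical 3x3 bitmaps (1 = ink, -1 = background); the real images may be larger.
L_SHAPE = [1, -1, -1,
           1, -1, -1,
           1,  1,  1]
T_SHAPE = [1,  1,  1,
           -1, 1, -1,
           -1, 1, -1]
DATASET = [(L_SHAPE, 0), (T_SHAPE, 1)]  # label 0 = L neuron, 1 = T neuron
NAMES = ["L", "T"]


def net_input(weights, x):
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def supervised_hebbian_step(weights, x, label_idx, lr=0.1):
    """Strengthen the correct neuron; weaken any other neuron that fires (net > 0)."""
    net = net_input(weights, x)
    updated = []
    for i, row in enumerate(weights):
        if i == label_idx:
            updated.append([w + lr * xi for w, xi in zip(row, x)])
        elif net[i] > 0:
            updated.append([w - lr * xi for w, xi in zip(row, x)])
        else:
            updated.append(list(row))
    return updated


def predict(weights, x):
    """Return the name of the neuron with the largest net input."""
    net = net_input(weights, x)
    return NAMES[max(range(len(net)), key=lambda i: net[i])]


W = [[0.0] * 9 for _ in range(2)]  # assumption: zero initialization
for _ in range(5):                 # assumption: 5 epochs
    for x, label in DATASET:
        W = supervised_hebbian_step(W, x, label)

for name, row in zip(NAMES, W):
    print(name, "neuron:")
    for r in range(3):
        print("  ", [round(v, 2) for v in row[3 * r: 3 * r + 3]])

noisy_L = [-1] + L_SHAPE[1:]  # flip the top-left pixel
print(predict(W, L_SHAPE), predict(W, T_SHAPE), predict(W, noisy_L))  # L T L
```

In this toy setup the two bitmaps disagree on six of nine pixels, so the wrong neuron never fires and each weight grid ends up as a scaled copy of its shape (vertical stroke plus base for L, top bar plus stem for T). With noisier or more overlapping training images, the weakening branch would play a larger role.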